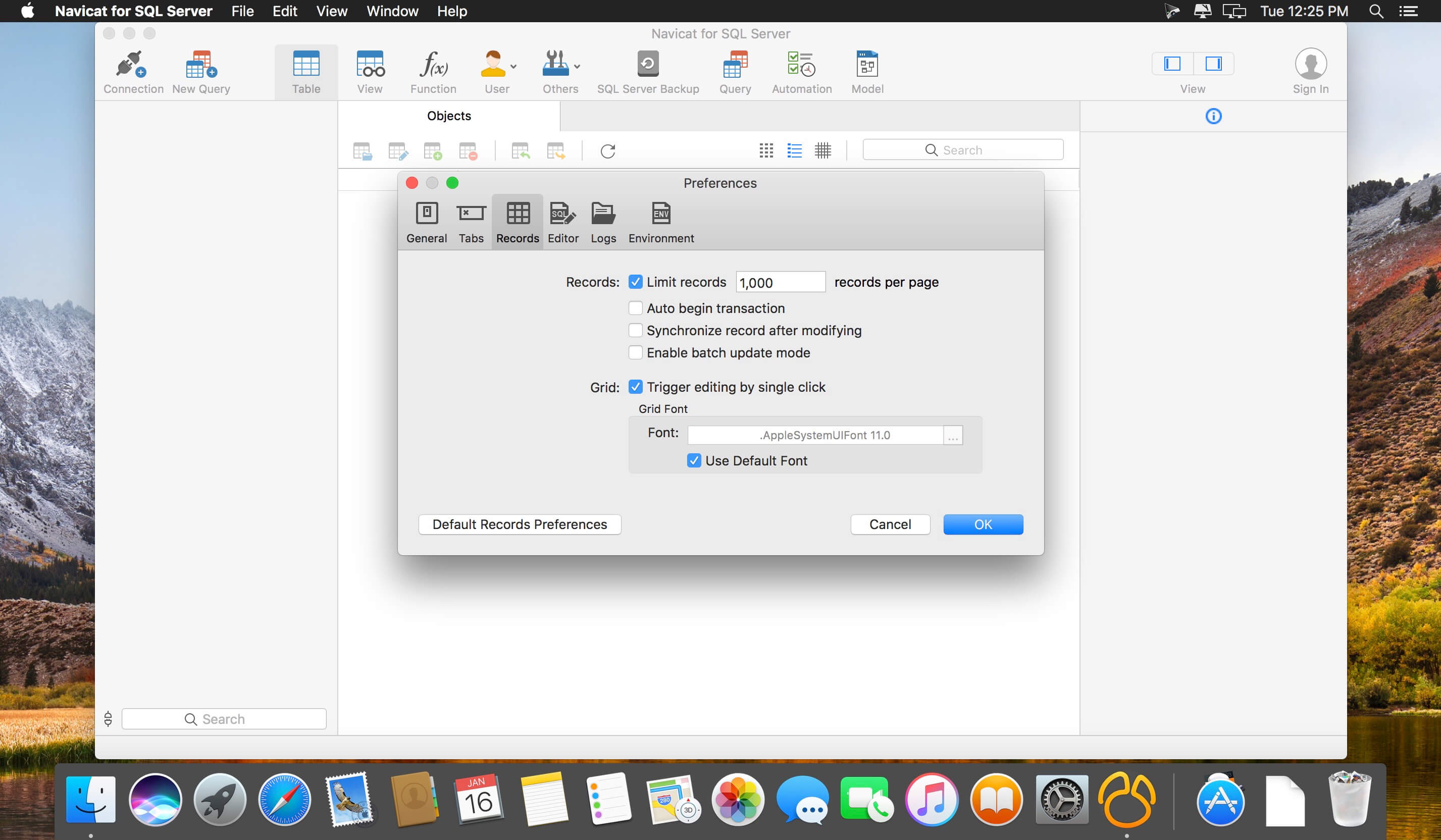

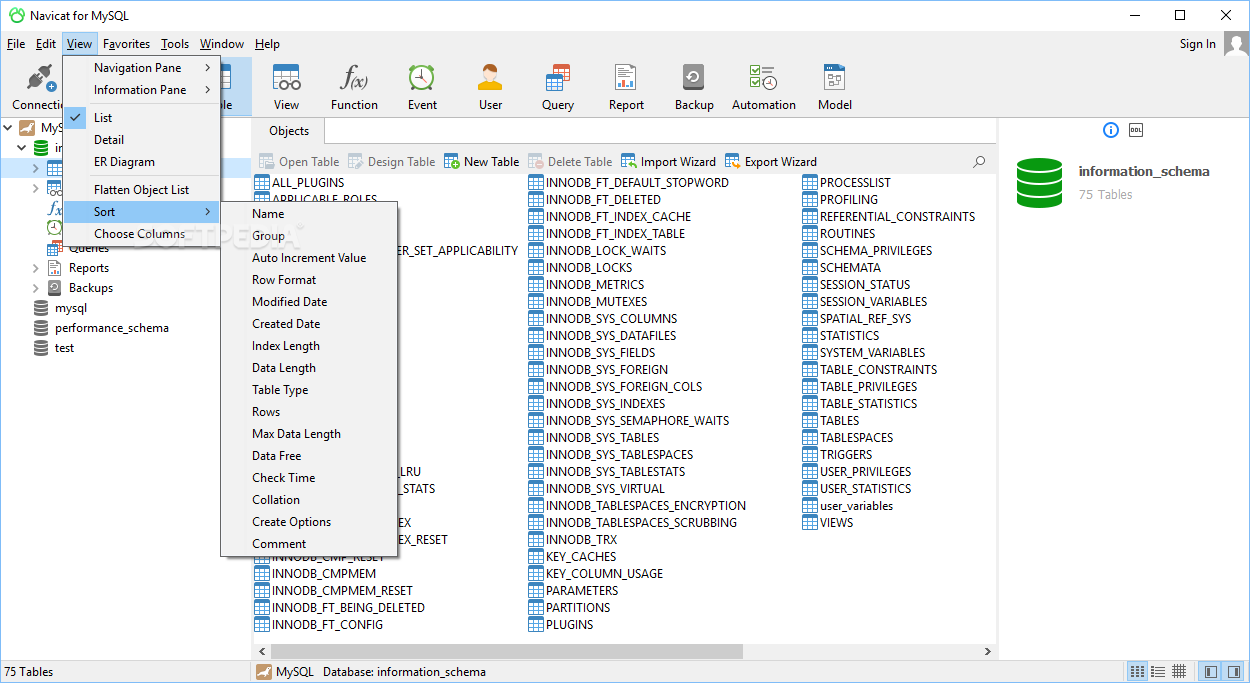

Navicat shows you the progress in real time: There is a screen for choosing the record delimiter, field delimiter, and text qualifier: It supports just about any format that you can imagine, including CSV, Excel, HTML, XML, JSON, and many other formats: Still another approach would be to use an import utility such as Navicat's Import Wizard. I would have liked to have used this method for importing data, but the files that we were importing from utilized a highly specialized and complex format that required a lot of front-end logic. In the above statement, the IGNORE 1 ROWS option is employed to ignore headers. Here's the statement for importing data from the "c:\tmp\discounts.csv" file into the discounts table: It can import a variety of delimited file formats, including commas (CSV), Tabs (TDV), and others. That's a MySQL-specific command, but most other database systems (DBMS) support something similar. LOAD DATA INFILEĪnother option, for those of you who aren't thrilled about writing scripting code, is to use something like LOAD DATA INFILE. Finally, note that 1000 is the maximum number of rows that can be inserted at one time using an INSERT statement. As long as the syntax is semantically correct, it will work just fine. While the above statement is formatted for readability, you don't have to concern yourself with that when generating the SQL dynamically. Here's a sample statement shown in Navicat for MySQL: To do that, we simply need to enclose each value list in parentheses and separate them using a comma: The INSERT statement supports several syntax variations, one of which is for inserting multiple rows at the same time. INSERT Statement Variation for Bulk Inserts In today's blog, we'll go over a few alternatives. Depending on your particular requirements, you may opt to go with a different solution.

To do that, I used the bulk insert form of the INSERT statement. Rather than process one record at a time, the script stored file contents in memory and then ran an INSERT statement every 1000 files. I recently wrote a node.js script to iterate over millions of files per day and insert their contents into a MySQL database. Three Ways to Perform Bulk Inserts by Robert Gravelle

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed